The Deployment Layer Is Where Enterprise AI Is Won

Foundation model labs are finally admitting something that took years of hard lessons to learn in the field: the smartest model does not win enterprise. Trust does.

In the span of twelve hours, two of the most prominent AI labs in the world made announcements that confirmed this reality. Anthropic announced a $1.5 billion services arm backed by Blackstone and Goldman Sachs. Hours earlier, Bloomberg reported that OpenAI was structuring a $10 billion version of the same idea with TPG and Bain Capital. These are not product announcements. They are structural bets: billions of dollars placed not on better models but on the layer that wraps around models and makes them work inside enterprise organizations.

The Real Bottleneck Is Not Capability

For years, the AI conversation in enterprise circles has been dominated by benchmark comparisons and capability leapfrogging. Which model scores highest on reasoning tasks? Which one writes better code? Which one handles longer context windows?

Those questions matter, but they are no longer the bottleneck.

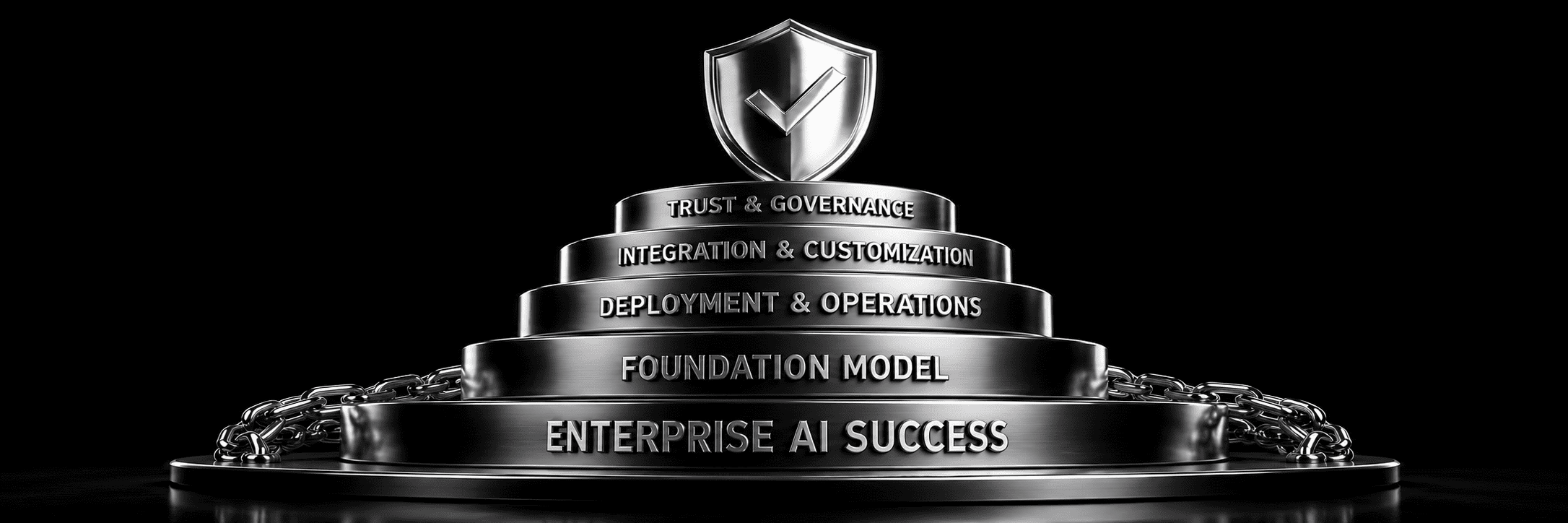

The bottleneck today is everything that happens after a model is selected. Change management. Onboarding. Customization to fit specific workflows. Integration with existing systems. Governance frameworks that satisfy legal, compliance, and security requirements. Evaluation pipelines tied to the customer's actual use cases, not generic benchmarks.

Both Anthropic and OpenAI just structured billions of dollars of services capacity to attack exactly these problems. That is a significant signal. When frontier labs pivot resources toward deployment infrastructure rather than pure research output, it tells you where the hard problem actually lives.

What Seven Years of Enterprise AI Sales Taught Me

Selling AI to Fortune 500 companies and government agencies is a humbling education. The best product does not automatically win. It does not matter how impressive a demo is if the solution cannot be landed cleanly inside a customer's workflow without breaking their governance requirements.

The work that actually closes deals and retains customers is unglamorous: meticulous onboarding, careful configuration, deep integration work, governance documentation, and evaluation frameworks built around what the customer actually measures, not what the vendor wants to showcase.

This is not a new insight. It is the same lesson that enterprise software companies learned in the 1990s and 2000s. The difference now is that AI capabilities are advancing so rapidly that many vendors assumed the technology would sell itself. It does not. The deployment layer has always been where the real work happens.

The PE Firms Are Backing the Right Layer

Private equity firms are not sentimental about technology. They follow the money, and right now the money is following the deployment layer.

Blackstone, Goldman, TPG, and Bain are not investing in model research. They are investing in the services infrastructure that sits between a foundation model and a working enterprise deployment. That infrastructure includes professional services, implementation teams, integration tooling, compliance frameworks, and ongoing support structures.

This is the layer where budget actually flows in enterprise deals. A company might pay for API access to a foundation model, but the larger line items — the ones that justify multi-year contracts — are almost always in deployment, customization, and governance.

What This Means for Enterprise AI Buyers

If you are evaluating AI vendors today, the question has fundamentally shifted. It is no longer "which model is the smartest?" It is "who can actually land this solution inside our workflow without breaking our governance requirements?"

The vendors who can answer that second question — with evidence, with references, with a credible implementation methodology — are the ones who will win the next decade of enterprise AI spend. The model layer is commoditizing. Capability gaps between frontier models are narrowing. The differentiation is moving up the stack, into deployment, integration, and trust.

The labs that just announced billion-dollar services arms already know this. The question is whether the rest of the market catches up.

Conclusion

The announcements from Anthropic and OpenAI within a single twelve-hour window were not coincidental. They reflect a shared recognition that frontier capability is no longer the primary competitive variable in enterprise AI. The deployment layer is the unglamorous, operationally intensive work of making AI function inside real organizations, and it is where the next wave of value will be created and captured.

For enterprise buyers, this is clarifying. For vendors, it is a call to invest in the capabilities that actually close deals and retain customers. The model layer is becoming a commodity. The deployment layer is the business.
