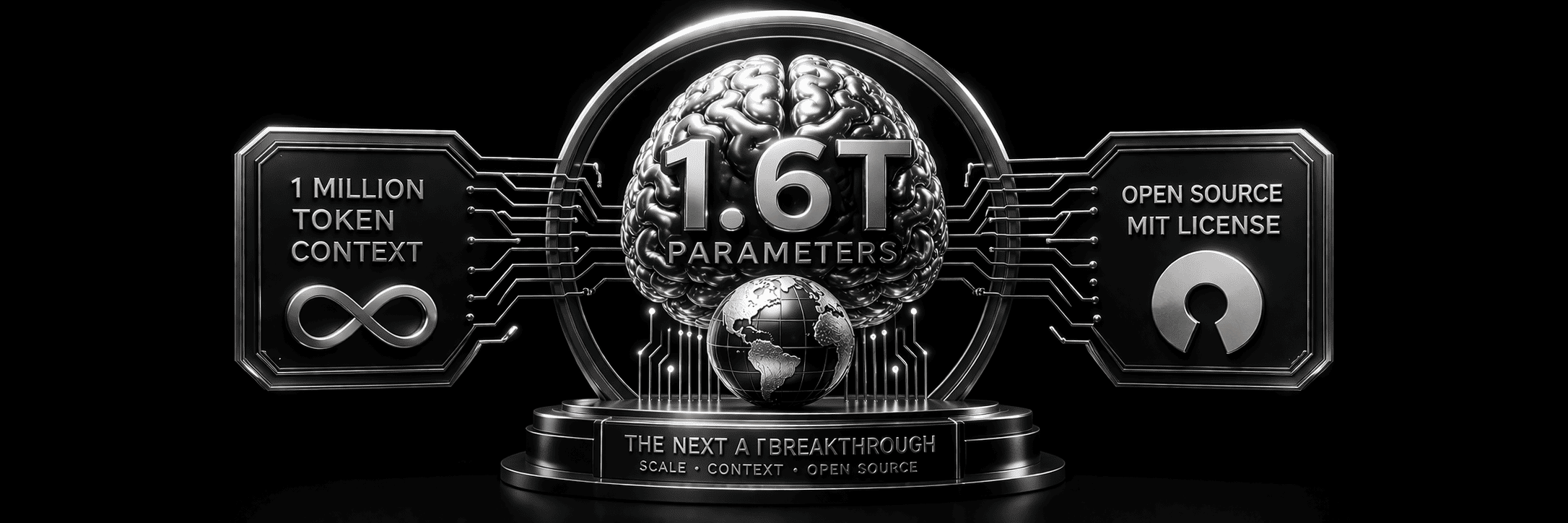

What the Next AI Breakthrough Looks Like: Scale, Context, and Open Source

AI is moving quickly, so it’s worth pausing to ask what the next major breakthrough might look like. Today’s models continue to push boundaries, but the next “DeepSeek moment” could reshape what’s possible in machine learning.

The Scale of Tomorrow: 1.6 Trillion Parameters

AI model size has grown rapidly over the past few years. From GPT-3’s 175 billion parameters to GPT-4’s rumored (and never officially confirmed) trillion-plus parameters, model complexity has increased by roughly an order of magnitude. By 2026, we could be looking at models with 1.6 trillion parameters, a scale at or beyond the largest figures publicly reported or estimated for today’s language models.

This dramatic increase in parameter count isn’t just about bigger numbers; it represents a fundamental expansion in a model’s capacity to understand, reason, and generate content. With 1.6 trillion parameters, AI systems could capture more nuanced patterns in data, understand more complex relationships between concepts, and generate more sophisticated, contextually appropriate responses.

The implications of such scale extend beyond raw computational power. Larger parameter counts, when matched with enough training data and compute, typically translate to better performance across diverse tasks, improved few-shot learning, and a stronger ability to handle edge cases and complex reasoning. This could mark the difference between AI that assists with specific tasks and AI that can engage in genuinely creative and analytical thinking across virtually any domain.
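To make the scale concrete, here is a rough back-of-the-envelope sketch of how much storage the weights alone of a 1.6-trillion-parameter model would need at common numeric precisions. The function name and the precision table are illustrative assumptions, and the figures cover only dense weight storage; activations, optimizer state, and inference caches would add to them.

```python
# Bytes needed to store one parameter at common numeric precisions.
# (Illustrative table; real deployments may mix precisions.)
BYTES_PER_PARAM = {"fp32": 4.0, "fp16/bf16": 2.0, "int8": 1.0, "int4": 0.5}

PARAMS = 1.6e12  # the hypothetical 1.6 trillion parameters discussed above


def weight_memory_tb(num_params: float, bytes_per_param: float) -> float:
    """Return weight storage in decimal terabytes (1 TB = 1e12 bytes)."""
    return num_params * bytes_per_param / 1e12


for name, size in BYTES_PER_PARAM.items():
    print(f"{name:>10}: {weight_memory_tb(PARAMS, size):.1f} TB")
```

Even at 16-bit precision the weights come to about 3.2 TB, which is why a model of this size would have to be sharded across many accelerators rather than run on a single device.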

Revolutionary Context: The Power of 1 Million Tokens

Perhaps even more significant than the parameter count is the prospect of a 1-million-token context window. To put this in perspective, many widely used models handle context windows of 32,000 to 128,000 tokens. A million-token context would be roughly 8 times the upper end of that range and about 31 times the lower end, a large jump in the amount of information an AI system could process and keep in view during a single conversation or task.
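How large the jump is depends on which end of the 32,000 to 128,000 token range is taken as the baseline. A minimal sketch of that arithmetic follows; the helper name `context_growth` is hypothetical.

```python
TARGET_WINDOW = 1_000_000  # the hypothetical 1-million-token context


def context_growth(new_window: int, old_window: int) -> float:
    """Return how many times larger new_window is than old_window."""
    return new_window / old_window


# Baselines taken from the range quoted in the text.
for old_window in (32_000, 128_000):
    factor = context_growth(TARGET_WINDOW, old_window)
    print(f"{old_window:,} -> {TARGET_WINDOW:,} tokens: {factor:.2f}x")
```

Measured against a 128,000-token window the increase is a little under 8x; measured against 32,000 tokens it is just over 31x.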

This expanded context capability would be transformative across multiple dimensions. For content creation and analysis, it would mean the ability to process entire books, comprehensive research papers, or extensive codebases in a single session. Legal professionals could upload complete contracts or case files for analysis. Researchers could feed entire datasets and receive comprehensive insights without the traditional limitations of context truncation.
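Whether a given book or contract actually fits in such a window can be estimated from its word count. The sketch below assumes roughly 4/3 tokens per English word, which is only a rule of thumb; real counts depend on the tokenizer and the language. The function names and the sample word counts are hypothetical.

```python
import math

# Assumption: about 4/3 tokens per English word. Real tokenizers vary.
DEFAULT_TOKENS_PER_WORD = 4 / 3


def estimate_tokens(word_count: int,
                    tokens_per_word: float = DEFAULT_TOKENS_PER_WORD) -> int:
    """Rough token estimate for a text of word_count words."""
    return math.ceil(word_count * tokens_per_word)


def fits_in_context(word_count: int,
                    context_window: int = 1_000_000,
                    reserved_for_output: int = 0,
                    tokens_per_word: float = DEFAULT_TOKENS_PER_WORD) -> bool:
    """True if the text, plus any tokens reserved for the reply, fits."""
    needed = estimate_tokens(word_count, tokens_per_word) + reserved_for_output
    return needed <= context_window


# Hypothetical document sizes, for illustration only.
for label, words in [("90k-word novel", 90_000), ("800k-word archive", 800_000)]:
    print(f"{label}: fits in 1M tokens? {fits_in_context(words)}")
```

Under this assumption a typical novel fits with room to spare, while a very large archive would still need to be split or summarized.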

The 1-million-token context would also enable more sophisticated long-form reasoning and planning. Current AI systems often struggle to maintain coherence and consistency across extended interactions due to context limitations. With a million-token window, AI could maintain detailed awareness of complex project requirements, user preferences, and ongoing conversation threads across much longer timeframes.

From a practical standpoint, this expanded context could eliminate many of the current friction points in AI interaction. Users wouldn’t need to repeatedly re-establish context, summarize previous interactions, or work around artificial constraints on input length. The AI would simply “remember” and process vastly more information, leading to more natural, efficient, and productive interactions.

Open Source Revolution: The MIT License Advantage

Perhaps the most democratizing aspect of this hypothetical 2026 breakthrough would be its release under an MIT license. The choice of licensing model for AI systems has profound implications for innovation, accessibility, and the pace of technological development. An MIT license would represent a commitment to open, unrestricted access that could accelerate AI advancement across the global technology ecosystem.

The MIT license is known for its permissive nature, allowing for both commercial and non-commercial use with minimal restrictions. For a 1.6-trillion-parameter AI model, this would mean that researchers, startups, enterprises, and individual developers could all access and build upon the same foundational technology. This stands in stark contrast to the proprietary models that currently dominate the AI landscape, where access is controlled and often expensive.

Such open access could spark an unprecedented wave of innovation. Small research teams and startups, previously unable to compete due to the massive computational and financial resources required to train large language models, would suddenly have access to cutting-edge AI capabilities. This democratization could lead to breakthrough applications in fields ranging from scientific research and education to creative arts and social impact initiatives.

Moreover, an open-source approach could accelerate the pace of AI safety research and improvement. With open access to model weights and architecture, and ideally to training methodology as well (which a permissive license on the weights does not by itself guarantee), the global research community could collaborate on identifying and addressing potential risks, biases, and limitations more effectively than is possible with closed systems.

Looking Toward 2026: Implications and Possibilities

The convergence of massive scale, extended context, and open access represents more than just incremental improvement. It suggests a shift in who gets to build with AI, what kinds of problems AI can tackle in one sitting, and how quickly new applications can emerge.
